Automatic Reward Densification

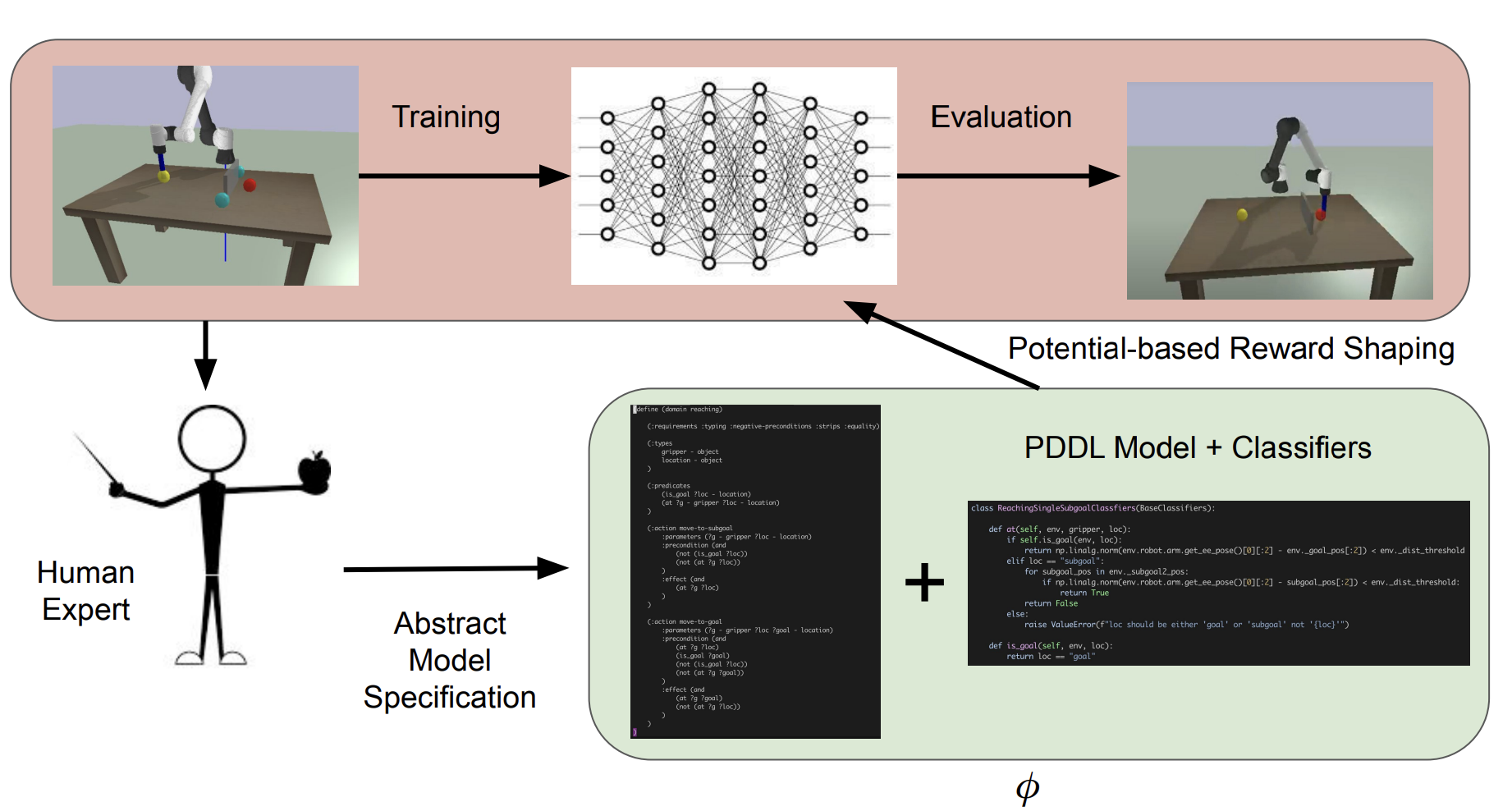

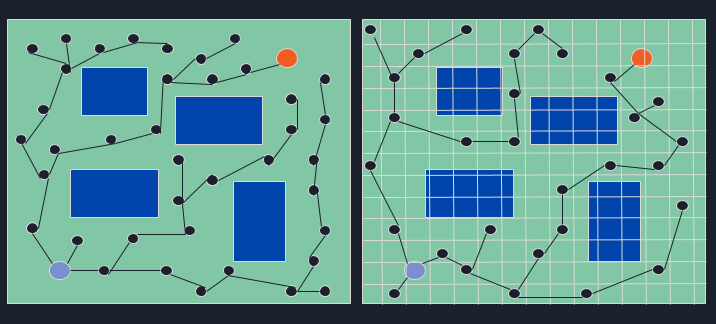

Classical planning over human-specified PDDL models, combined with potential-based shaping, densifies sparse rewards and speeds up learning across robotic tasks.
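As a rough illustration of the shaping step, the sketch below adds the standard potential-based term F(s, s') = γΦ(s') − Φ(s) to a sparse reward. The potential used here (negative remaining plan length) and both function names are assumptions for illustration, not necessarily the project's actual choice.

```python
def potential_from_plan_length(steps_to_goal):
    """Hypothetical potential: states closer to the goal (shorter plan) score higher."""
    return -float(steps_to_goal)


def shaped_reward(reward, phi_s, phi_next, gamma=0.99, done=False):
    """Return r + gamma * Phi(s') - Phi(s).

    The potential of a terminal state is treated as 0, a common
    convention in episodic tasks.
    """
    next_potential = 0.0 if done else phi_next
    return reward + gamma * next_potential - phi_s


# One step that shortens the remaining plan from 3 actions to 2 earns a
# positive shaped reward even though the environment reward is 0.
step_reward = shaped_reward(
    reward=0.0,
    phi_s=potential_from_plan_length(3),
    phi_next=potential_from_plan_length(2),
    gamma=1.0,
)
```

Because the added term is a difference of potentials, it changes how quickly the agent learns without changing which policies are optimal.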


Prototype wearable combines OCR and face recognition to support blind users with reading and social identification.

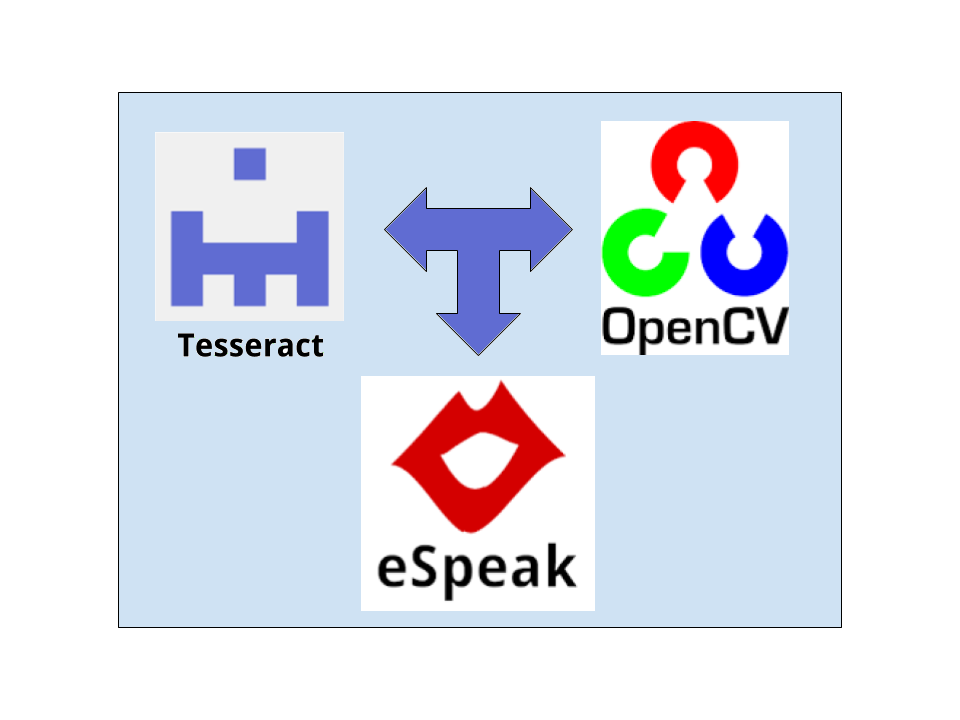

Drake-based finite-state control splits the catch into pre- and post-contact modes, combining projectile modeling with stabilized IK to stop a ping pong ball on an iiwa paddle.

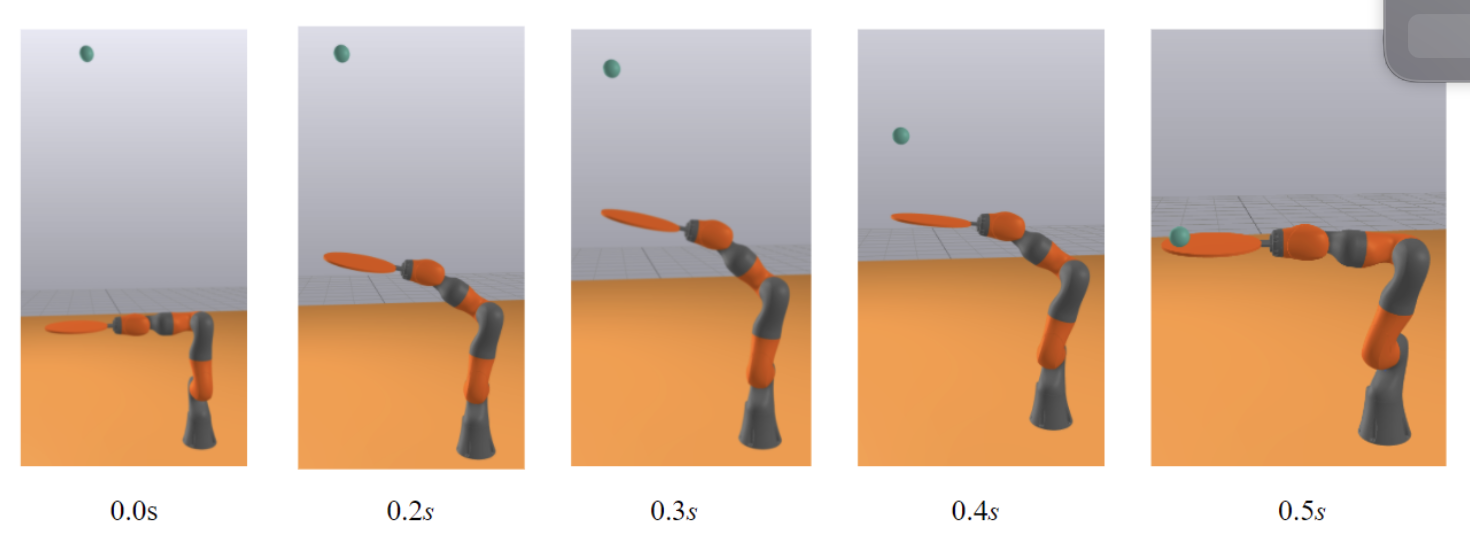

dRRT couples discrete sampling with search-based refinement, reusing computation to accelerate 5-DOF arm planning versus traditional RRT.

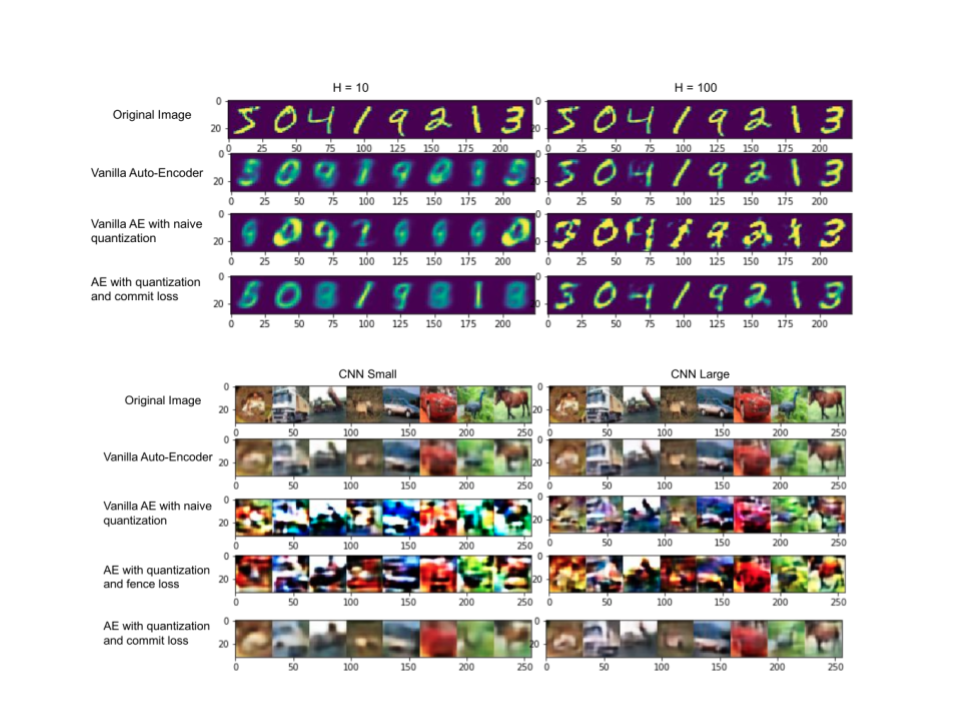

Tested quantization-aware autoencoders that couple a commitment loss with training-time discretization to achieve compact latent codes for images and text.

Collected simulator trajectories, trained a CNN to map images to steering commands, and demonstrated closed-loop driving in the Unreal Blocks environment.

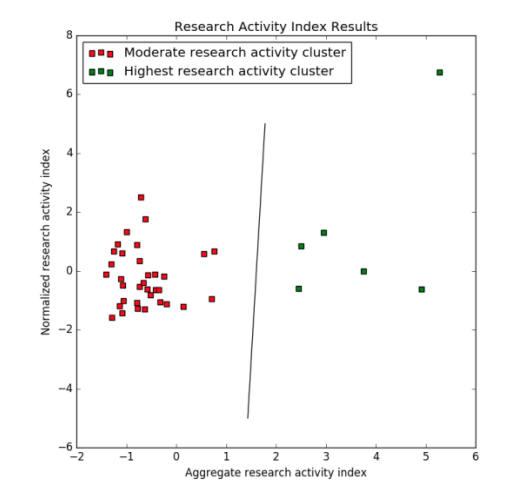

Designed quantitative criteria for identifying research-focused higher education institutions (HEIs) in India, applied them to top institutions, and identified 40 universities and 32 engineering colleges as research-focused.

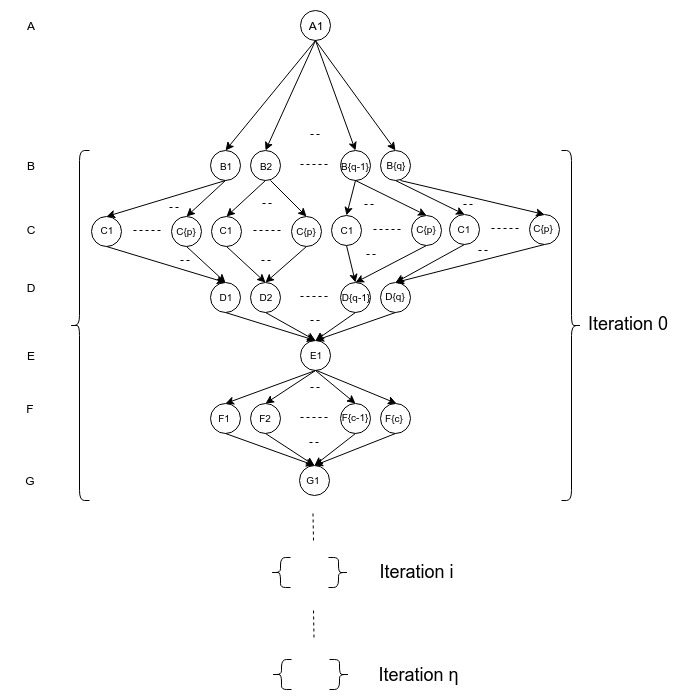

Work-efficient GPU traversal expands frontier vertices in parallel and accelerates DFS-based analytics on massive DAGs.

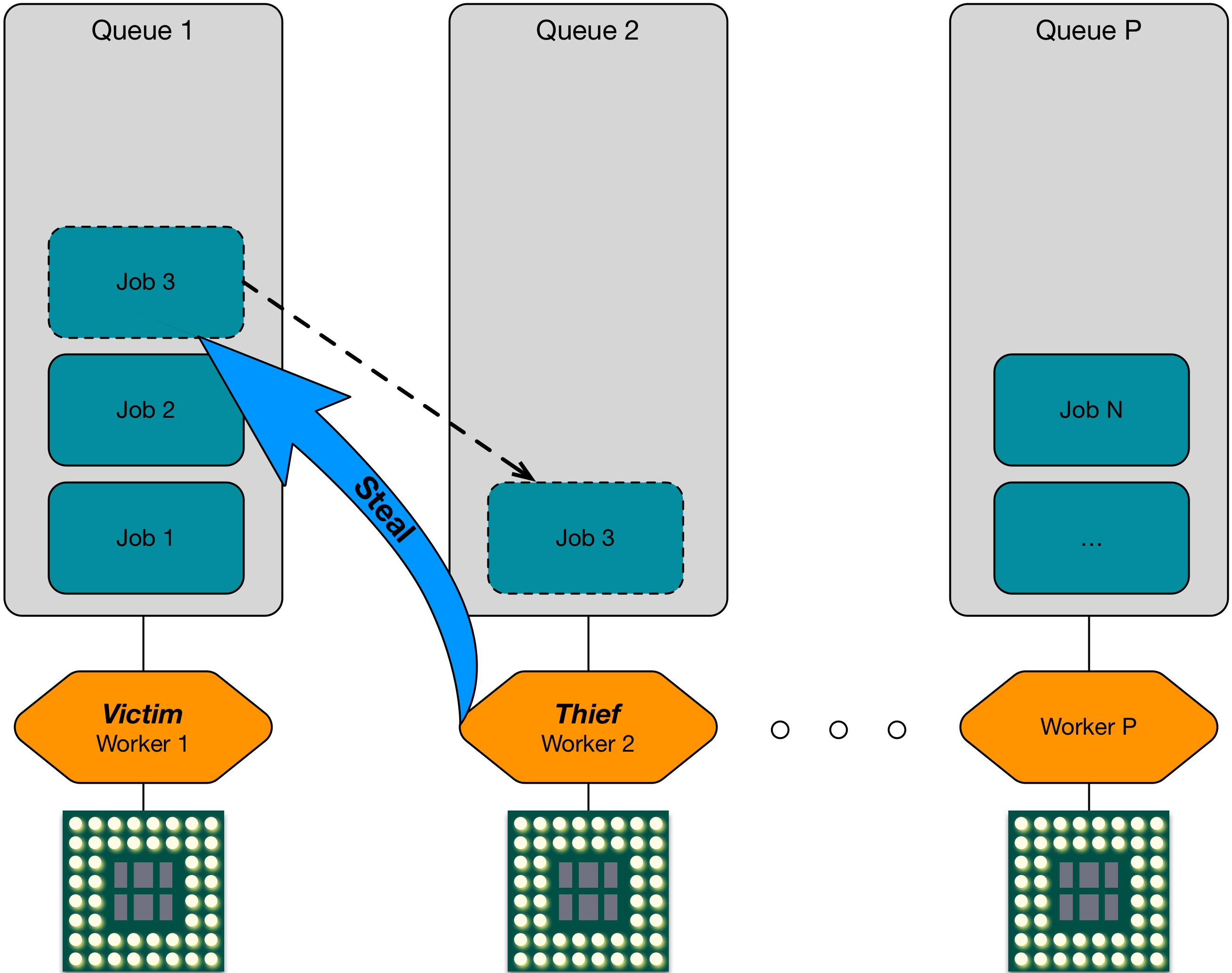

COTTON couples work stealing with CPU frequency scaling policies tuned by task heuristics, cutting energy use while preserving throughput.

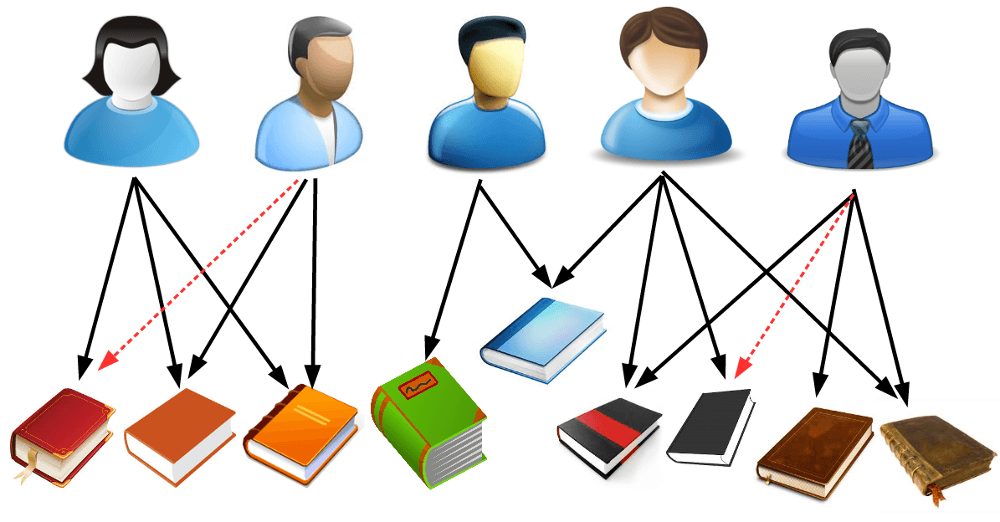

Collected contribution signals, formed confidence-weighted implicit ratings, and trained a custom autoencoder that surfaces relevant GitHub repos for contributors.
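One common way to form confidence-weighted implicit ratings is a binary preference paired with a confidence that grows with the interaction count, c = 1 + α·r. The sketch below assumes that scheme; the function names, the `alpha` value, and the use of a raw contribution count as the signal are all hypothetical rather than taken from the project.

```python
def implicit_rating(contributions, alpha=40.0):
    """Map a raw contribution count to a (preference, confidence) pair.

    preference is 1 if the user contributed at all, else 0;
    confidence is 1 + alpha * contributions (an assumed weighting scheme).
    """
    preference = 1 if contributions > 0 else 0
    confidence = 1.0 + alpha * contributions
    return preference, confidence


def weighted_error(preference, confidence, prediction):
    """Confidence-weighted squared reconstruction error for one user-repo pair."""
    return confidence * (preference - prediction) ** 2


# A repo with two contributions is a strong positive; an untouched repo is
# a weak negative that still contributes a small loss term.
pref, conf = implicit_rating(2, alpha=10.0)
loss = weighted_error(pref, conf, prediction=0.5)
```

Weighting the reconstruction loss this way lets an autoencoder treat missing interactions as low-confidence negatives rather than as definite dislikes.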