Lossy Compression using Neural Networks

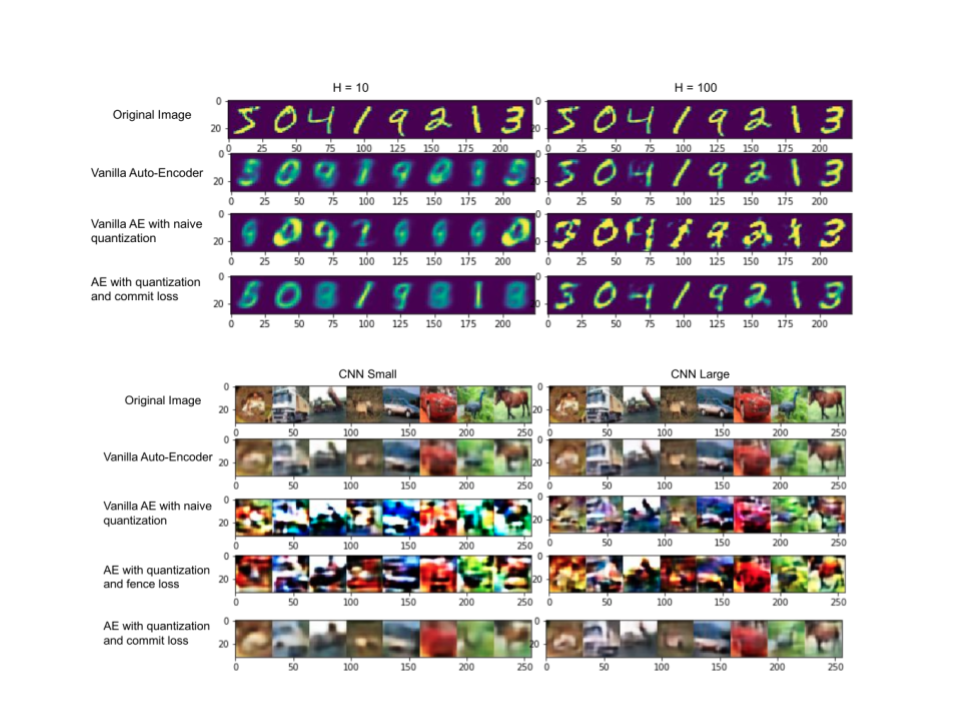

We built autoencoder models with discrete latent spaces for lossy compression of images and text, aiming for reconstruction quality comparable to continuous-latent counterparts such as VAEs. We first benchmarked naive post-hoc quantization of a trained model's latents as a baseline. We then incorporated a quantization objective (a commitment loss) directly into training and explored strategies for discretizing the latents at training time. Experiments across multiple datasets show that the quantization-aware variants improve compression ratios over the post-hoc baseline with minimal degradation in reconstruction quality.
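To make the quantization objective concrete, here is a minimal sketch of nearest-neighbor codebook quantization with a commitment-style penalty. It assumes a VQ-VAE-like formulation, which the summary above does not confirm. The function names, the toy codebook, and `beta=0.25` are illustrative assumptions, not taken from this project. The sketch only computes forward values in plain Python. In real training, the codebook entry would be treated as a constant (stop-gradient) inside the commitment term.

```python
import math

def squared_distance(a, b):
    """Squared Euclidean distance between two equal-length vectors."""
    return sum((x - y) ** 2 for x, y in zip(a, b))

def quantize(latents, codebook):
    """Return, for each latent vector, the index of its nearest codebook entry."""
    indices = []
    for z in latents:
        dists = [squared_distance(z, e) for e in codebook]
        indices.append(dists.index(min(dists)))
    return indices

def commitment_loss(latents, codebook, indices, beta=0.25):
    """beta times the mean squared distance from each latent to its chosen code.

    beta=0.25 is an assumed default for illustration, not a value from the source.
    """
    total = sum(squared_distance(z, codebook[k]) for z, k in zip(latents, indices))
    return beta * total / len(latents)

def bits_per_latent(codebook_size):
    """Bits needed to store one codebook index with a fixed-length code."""
    return math.ceil(math.log2(codebook_size))

# Hypothetical toy data: a 4-entry codebook and three 2-D encoder outputs.
codebook = [[0.0, 0.0], [1.0, 1.0], [-1.0, 1.0], [1.0, -1.0]]
latents = [[0.9, 1.2], [-0.8, 0.7], [0.1, -0.2]]

indices = quantize(latents, codebook)
loss = commitment_loss(latents, codebook, indices)
bits = bits_per_latent(len(codebook))
```

With this toy codebook, each latent is stored as a 2-bit index rather than two floating-point values, which is where the compression comes from. The commitment term penalizes encoder outputs that drift far from the code they are mapped to.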