Task-driven Risk-bounded Hierarchical Reinforcement Learning Based on Iterative Refinement

Published in AAAI Spring Symposium on Human-Like Learning (Oral), 2024

Our approach incrementally tightens motion primitives with learned risk-aware priors, so safety-constrained agents can adapt planning horizons without exploding computation.

Abstract

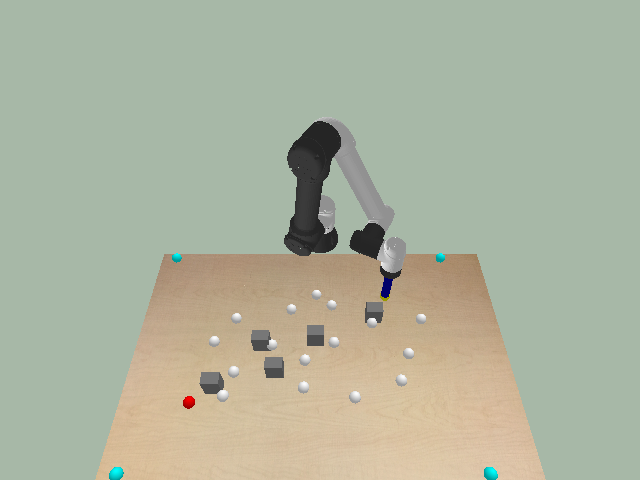

Deep Reinforcement Learning (RL) faces significant scalability challenges when dealing with complex, long-horizon tasks. This paper addresses this issue by introducing a hybrid approach that combines model-based conditional planning with RL. The proposed approach incorporates a novel iterative primitive refinement technique that strategically biases computational effort to accelerate policy improvement, which is particularly beneficial in risk-bounded and time-critical domains. In addition, we explore a range of prioritization methods and analyze their strengths and weaknesses. To demonstrate the scalability of the proposed approach, we evaluate it against relevant baseline methods in both 2D and 3D robot manipulation environments, and we empirically examine the performance of the prioritization methods.

BibTeX

@inproceedings{parimi2024task,
  title={Task-driven Risk-bounded Hierarchical Reinforcement Learning Based on Iterative Refinement},
  author={Parimi, Viraj and Hong, Sungkweon and Williams, Brian},
  booktitle={Proceedings of the AAAI Symposium Series},
  volume={3},
  number={1},
  pages={573--575},
  year={2024}
}